Enterprise AI has never been more hyped or more misunderstood. Boards demand “AI transformation,” vendors promise magic, and internal teams scramble to bolt models onto legacy systems. Yet behind the noise sits a sobering reality: most AI initiatives never make it past the pilot stage, and even fewer deliver sustained business value.

The uncomfortable truth is that most failures have little to do with algorithms or cloud infrastructure. Initiatives fail because the organisation isn’t ready: strategy is vague, data is fragile, sponsorship is weak, and change is underestimated.

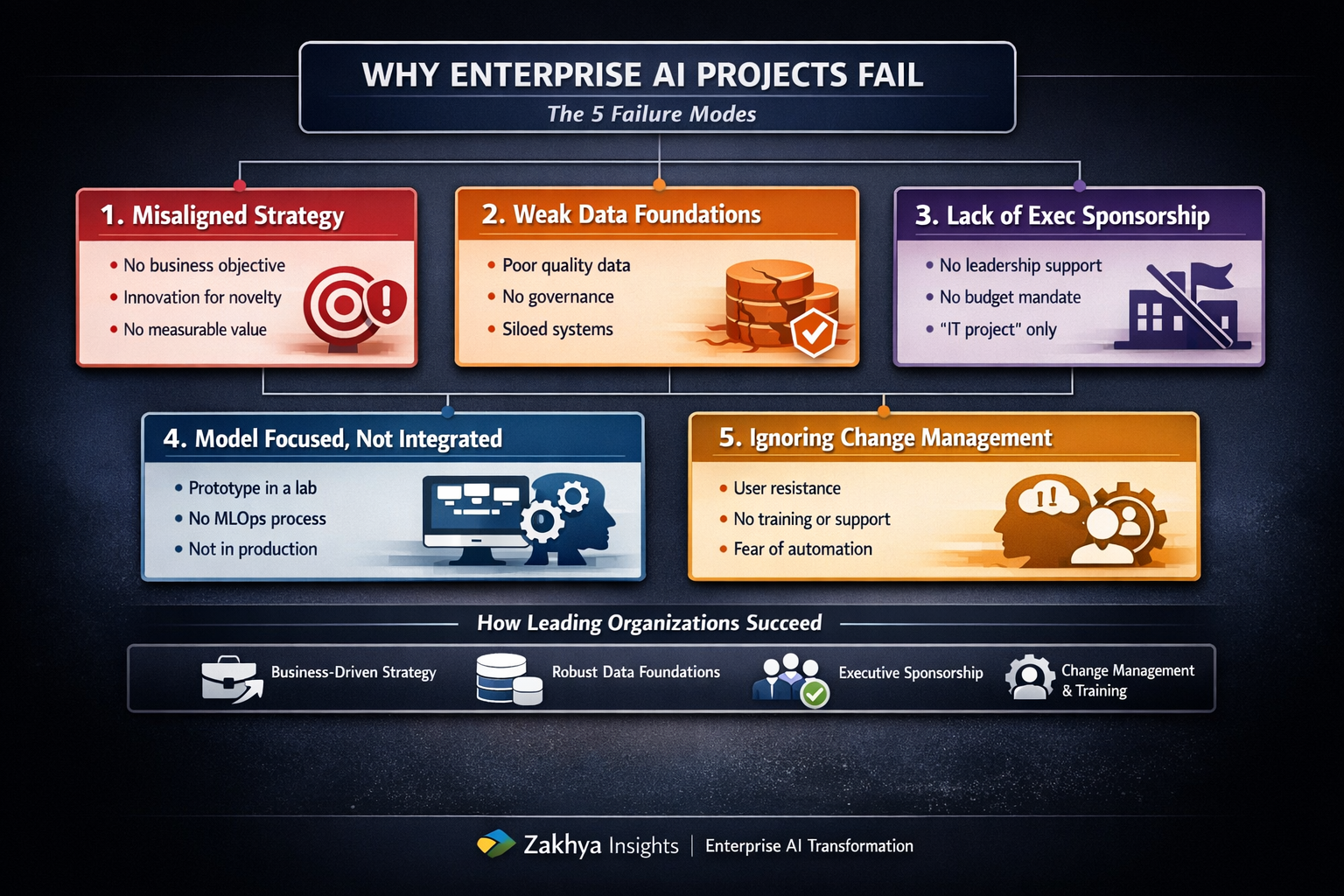

Most AI initiatives stall not because of technology, but because of misaligned strategy, poor data foundations, and a lack of executive sponsorship.

In this article, we break down the five most common failure modes of enterprise AI and what leading organisations do differently to beat the odds.

The five failure modes of enterprise AI

The diagram below summarises the five dominant patterns behind failed AI programmes — and the counter‑moves used by organisations that consistently scale AI.

1. Misaligned strategy: AI without a business problem

Many AI projects begin with a model in search of a use case. A team experiments with large language models or predictive analytics, builds a promising prototype, and then… nothing. No adoption. No budget. No measurable impact.

This happens when AI is treated as an innovation experiment rather than a strategic capability. Initiatives are approved because they are “AI” rather than because they solve a clearly defined business problem.

- Symptoms: No business owner, no P&L accountability, success measured in demos not outcomes.

- Risk: AI becomes a cost centre and a credibility drain.

Leading organisations reverse the logic: they start with a business bottleneck, not a model. Every AI initiative is anchored to a specific outcome (reduced cycle time, higher throughput, lower cost‑to‑serve, or improved compliance) and has a named business sponsor who owns the result.

If no one can answer “What changes in the business if this works?”, the AI project isn’t ready.

2. Weak data foundations: AI built on sand

AI is only as strong as the data beneath it. Yet most enterprises underestimate the complexity of their data landscape: fragmented systems, inconsistent schemas, missing lineage, and governance debt accumulated over years.

- Symptoms: Data quality is assumed, not validated; key datasets live in silos; no single source of truth.

- Risk: Models that look impressive in a lab but behave unpredictably in production.

Organisations that succeed with AI invest early in data readiness. They build domain‑oriented data products instead of monolithic lakes, define clear ownership for curation and governance, and treat data as a strategic asset rather than an IT by‑product.

In practice, that means:

- Running data quality and coverage assessments before model design.

- Establishing governance for access, lineage, and compliance.

- Designing reusable data products that can power multiple AI use cases.
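The first item above, a quality and coverage assessment before model design, can be sketched in a few lines. This is a minimal illustration rather than a prescribed method: the function names, the sample records, and the 95% completeness threshold are all assumptions made for the example, and a real assessment would also cover validity, freshness, and lineage.

```python
from dataclasses import dataclass


@dataclass
class ColumnReport:
    column: str
    completeness: float   # share of rows with a non-missing value
    distinct_values: int  # number of distinct non-missing values


def assess_quality(rows, required_columns, missing_markers=(None, "")):
    """Build a per-column report for a list of dict records.

    Assumes values are hashable (strings, numbers), which holds for
    the hypothetical sample below.
    """
    total = len(rows)
    reports = []
    for column in required_columns:
        present = [
            row[column]
            for row in rows
            if column in row and row[column] not in missing_markers
        ]
        completeness = len(present) / total if total else 0.0
        reports.append(ColumnReport(column, completeness, len(set(present))))
    return reports


def failing_columns(reports, min_completeness=0.95):
    """Columns below the agreed threshold (0.95 is an assumed value)."""
    return [r.column for r in reports if r.completeness < min_completeness]


# Hypothetical customer records with typical gaps: an empty string,
# an explicit None, and a key that is absent altogether.
sample_rows = [
    {"customer_id": "C1", "region": "EU", "churned": 0},
    {"customer_id": "C2", "region": "", "churned": 1},
    {"customer_id": "C3", "region": "US", "churned": None},
    {"customer_id": "C4", "churned": 0},
]

reports = assess_quality(sample_rows, ["customer_id", "region", "churned"])
blocked = failing_columns(reports)
```

Here `blocked` lists the columns that would need remediation before modelling starts. The point of the design is that the check runs against an explicit, agreed threshold instead of quality being assumed.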

3. Lack of executive sponsorship: AI without political capital

AI projects often start in innovation labs or technical teams, far from the executives who control budgets, incentives, and organisational behaviour. Without senior sponsorship, even the best AI solution dies quietly.

- Symptoms: AI is labelled “an IT thing”; funding is piecemeal; adoption is “best effort.”

- Risk: No mandate to change processes, roles, or KPIs, so nothing really changes.

Leading organisations treat AI as a leadership agenda, not a technical experiment. They appoint an executive sponsor for each major initiative, align AI with strategic priorities (customer experience, operational efficiency, regulatory compliance), and create a cross‑functional steering group spanning business, data, engineering, and risk.

When AI has political capital, it can reshape processes, incentives, and operating models, not just dashboards.

4. Over‑focus on models, under‑focus on integration

Many enterprises can build a model. Far fewer can integrate it into production systems, workflows, and decision‑making processes. The result: beautiful prototypes that never leave the sandbox.

- Symptoms: Impressive PoCs, but no live users; manual hand‑offs; fragile scripts.

- Risk: “Pilot purgatory”: endless experimentation with no scaled value.

High‑performing organisations treat AI as a product, not a project. They invest in MLOps platforms, CI/CD pipelines, monitoring, and lifecycle management. They design for workflow integration from day one: where the AI decision lands, who consumes it, and how it triggers action.
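To make the monitoring part concrete, here is a small sketch of one widely used drift check, the Population Stability Index (PSI), which compares the distribution of a feature or score in production against a baseline. It is offered as an illustration only: the article does not name a specific metric, and the function names, the equal-width binning, and the smoothing floor are assumptions chosen for this example.

```python
import math


def bin_shares(values, edges, floor=1e-6):
    """Share of values per bin; out-of-range values fall into the edge bins.

    Assumes `values` is non-empty. The floor avoids log(0) for empty bins.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = 0
        while idx < len(counts) - 1 and v >= edges[idx + 1]:
            idx += 1
        counts[idx] += 1
    total = len(values)
    return [max(c / total, floor) for c in counts]


def population_stability_index(baseline, live, bins=10):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back if the baseline is constant
    edges = [lo + i * width for i in range(bins + 1)]
    expected = bin_shares(baseline, edges)
    actual = bin_shares(live, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Hypothetical scores: the live population has shifted upwards by 50.
baseline_scores = list(range(100))
shifted_scores = [score + 50 for score in baseline_scores]

psi_same = population_stability_index(baseline_scores, baseline_scores)
psi_shifted = population_stability_index(baseline_scores, shifted_scores)
```

A common rule of thumb treats a PSI above roughly 0.25 as a significant shift that deserves investigation, though the right threshold depends on the use case. In a production setting a check like this would typically run on a schedule and raise an alert to the team that owns the model.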

A model that isn’t embedded in a workflow is not an AI solution; it’s a demo.

5. Ignoring change management: people aren’t ready

AI changes how people work. It alters roles, responsibilities, incentives, and sometimes identities. Yet change management is often treated as an afterthought, something to “add later” once the model is ready.

- Symptoms: User resistance, workarounds, low adoption, rumours about job loss.

- Risk: Technically sound solutions that nobody wants to use.

Organisations that scale AI understand that adoption is a human transformation disguised as a technical one. They involve users early, co‑design workflows, communicate clearly that AI is there to augment rather than replace, and provide role‑specific training and support.

Early wins are celebrated publicly to build trust and momentum.

How leading organisations beat the odds

When you look at enterprises that consistently succeed with AI, a common operating model emerges. It’s less about secret algorithms and more about disciplined execution.

- Business‑anchored AI strategy: Every initiative starts with a clear, measurable business outcome.

- Strong data foundations: Data products, governance, and quality are treated as first‑class work.

- Executive sponsorship: Senior leaders own the outcomes and remove organisational blockers.

- Industrialised delivery: MLOps, integration, monitoring, and lifecycle management are built in.

- Human‑centric change: Training, communication, and adoption are core deliverables, not optional extras.

The bottom line

AI doesn’t fail because the technology is immature. It fails because enterprises underestimate the organisational, cultural, and operational shifts required to make it work at scale.

The organisations that win with AI are not necessarily the ones with the most advanced models. They are the ones with the most aligned strategy, the strongest data foundations, and the clearest leadership commitment, and they treat people and change as central to the journey.

If you want your AI programme to beat the odds, start by fixing the organisation, not the model.